Proof of Personhood Explained: How It Works, Who's Building It, and Why It Matters Now

Feb 26, 2026

What is Proof of Personhood?

Proof of personhood (PoP) is a way to verify that a digital identity belongs to a real, unique human, without forcing that person to reveal who they are. It answers two questions at once: "Are you human?" and "Have you already registered?"

Traditional identity verification (KYC) wants to know your name. Proof of personhood doesn't care about your name; it only cares whether you're a distinct person. In a well-designed system, no documents are handed over, no biometric databases are built, and no personal data is exposed to third parties. The concept was first formalized in a 2017 paper by Borge et al., and the term has since become the standard framing, used by Vitalik Buterin, by researchers at MIT and OpenAI, and on Wikipedia.

There is no native identity layer on the internet. Nothing stops one person from creating a hundred accounts, a thousand wallets, or a million bots. Using fake identities like these to game a system is called a Sybil attack, and such attacks have never been easier or more sophisticated. Today's AI agents solve CAPTCHAs, maintain believable social profiles, carry on extended conversations, and operate at scale without human supervision.

Still, being human matters online. A 2025 CoinGecko survey found that over 65% of crypto-native users consider the human-AI distinction online "very important." Proof of personhood is the mechanism built to draw that line, and with the right architecture it does so without surveillance. Projects like Human Passport are already implementing this at scale.

The Terminology Problem

Multiple terms float around the space, often used interchangeably. Are they truly the same? Let’s unpack the most common terms:

Proof of personhood (PoP) is the term with academic and institutional backing. It describes a system where each unique human receives exactly one credential or participation right: persistent, reusable across applications, not a one-time gate. This is the term used across the academic and institutional literature.

Proof of humanity (PoH) carries two meanings, which is why it creates confusion. It's the name of a specific Kleros-backed Ethereum registry using video submission and peer vouching. It's also used casually as shorthand for proof of personhood. A useful distinction some researchers draw: proof of humanity as a point-in-time check (like a liveness test), versus proof of personhood as an enduring credential that travels with you across platforms and sessions. Note: there is no universally accepted formal framework defining PoH.

Human verification is the umbrella: CAPTCHAs, phone checks, liveness detection, email confirmation. Any mechanism that asks "is a person doing this?" falls here. But human verification doesn't ask about uniqueness. Someone can clear a thousand CAPTCHAs and open a thousand accounts, one per CAPTCHA. Proof of personhood closes that hole: one person, one credential, enforced cryptographically. Every proof of personhood system is a form of human verification. The reverse isn't true.
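The gap between "is a person" and "is a unique person" can be shown with a minimal Python sketch. It assumes a hypothetical registry where the human check (a CAPTCHA, say) is stubbed out as a boolean and each person holds a hypothetical secret. The human-check-only registry accepts every repeat; the personhood registry also derives a deterministic identifier (a "nullifier") from that secret and rejects anyone it has already seen. Real systems derive nullifiers from credentials inside cryptographic proofs; the plain SHA-256 hash here only stands in for that step.

```python
import hashlib


def nullifier(person_secret: bytes, app_id: str) -> str:
    """Deterministic per-app identifier: same secret and app always give the same value."""
    return hashlib.sha256(app_id.encode() + b"|" + person_secret).hexdigest()


class HumanCheckOnly:
    """Accepts anyone who passes a (stubbed) human check, e.g. a CAPTCHA."""

    def __init__(self) -> None:
        self.accounts = 0

    def register(self, passed_human_check: bool) -> bool:
        if passed_human_check:
            self.accounts += 1
            return True
        return False


class PersonhoodRegistry:
    """Also enforces uniqueness: at most one account per nullifier."""

    def __init__(self, app_id: str) -> None:
        self.app_id = app_id
        self.seen: set[str] = set()

    def register(self, passed_human_check: bool, person_secret: bytes) -> bool:
        if not passed_human_check:
            return False
        n = nullifier(person_secret, self.app_id)
        if n in self.seen:
            return False  # the same human trying to register again
        self.seen.add(n)
        return True


if __name__ == "__main__":
    alice = b"alice-secret"  # hypothetical per-person secret
    captcha_only = HumanCheckOnly()
    pop = PersonhoodRegistry("airdrop-2026")
    for _ in range(1000):
        captcha_only.register(passed_human_check=True)
        pop.register(passed_human_check=True, person_secret=alice)
    print(captcha_only.accounts, len(pop.seen))  # 1000 1
```

Scoping the nullifier to `app_id` also means the same person gets a different identifier in every application, which keeps their activity hard to link across apps.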

Proof of human is branding, not taxonomy. World (formerly Worldcoin) adopted the term in its 2025 messaging, tying it specifically to its product.

Decentralized identity (DID) is the infrastructure layer: W3C Decentralized Identifiers, Verifiable Credentials, self-sovereign identity frameworks. It's the plumbing that lets people own and selectively share credentials without a central authority. Proof of personhood sits inside this infrastructure as its most fundamental application: before you can issue a credential for age, nationality, or professional standing, you need to know the holder is a real, unique person. Once personhood is established, everything else stacks on top, creating a composable identity stack that remains under the user's control.
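To show where a personhood claim could sit in this plumbing, here is a Python sketch of an unsigned credential laid out according to the W3C Verifiable Credentials Data Model 2.0. The `PersonhoodCredential` type, the `isUniqueHuman` claim, and both DIDs are hypothetical placeholders. A real credential would also carry a cryptographic proof, and a real verifier would check that proof and validate against the full specification rather than running the shallow key check shown here.

```python
# Unsigned credential following the VC Data Model 2.0 layout.
# The type name, claim name, and DIDs below are hypothetical placeholders.
credential = {
    "@context": ["https://www.w3.org/ns/credentials/v2"],
    "type": ["VerifiableCredential", "PersonhoodCredential"],
    "issuer": "did:example:issuer",
    "validFrom": "2026-02-26T00:00:00Z",
    "credentialSubject": {
        "id": "did:example:holder",
        "isUniqueHuman": True,  # the only claim disclosed: no name, no document data
    },
}

REQUIRED_KEYS = {"@context", "type", "issuer", "credentialSubject"}


def looks_like_vc(doc: dict) -> bool:
    """Shallow structural check only; it does not verify any signature or proof."""
    return (
        REQUIRED_KEYS.issubset(doc)
        and doc["@context"][0] == "https://www.w3.org/ns/credentials/v2"
        and "VerifiableCredential" in doc["type"]
    )


if __name__ == "__main__":
    print(looks_like_vc(credential))  # True
```

Further credentials for age or nationality would follow the same layout, which is what makes the stack composable once personhood is established.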

How Proof of Personhood Works in Practice

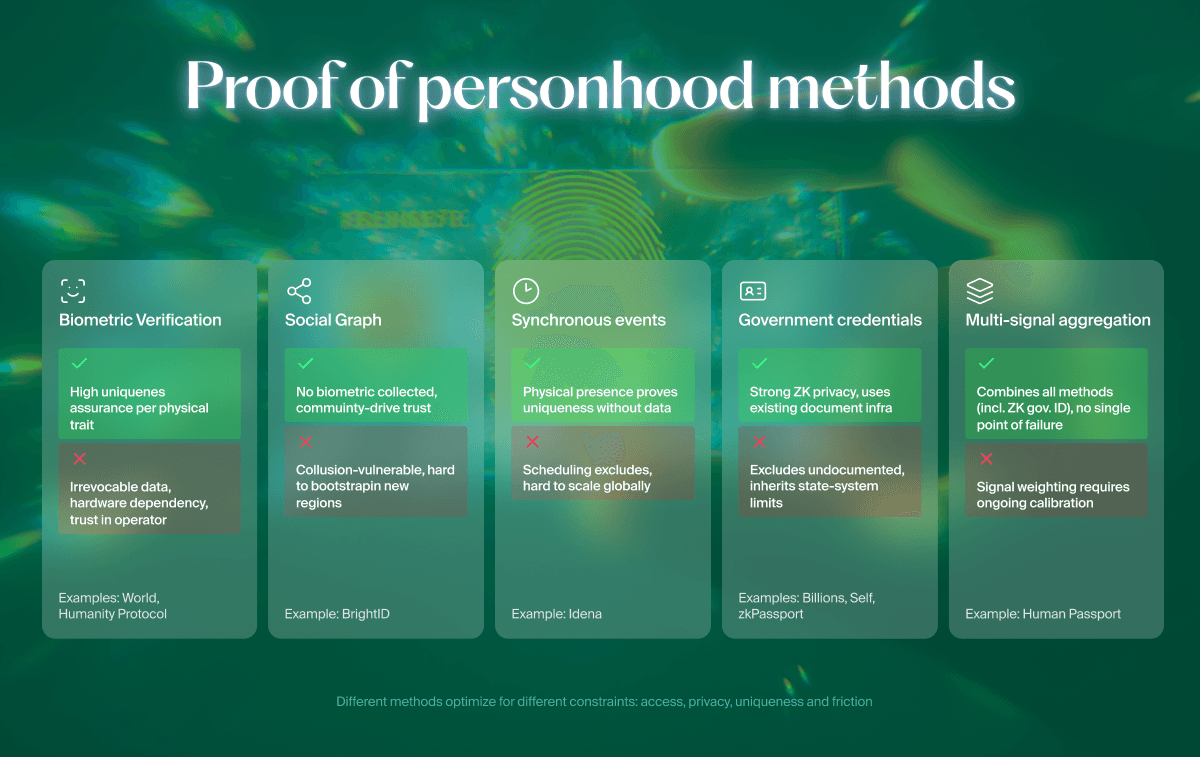

There is no single correct method. Every approach trades something for something else, and the systems that hold up best are the ones built on more than one bet.

Biometric Verification

Scans physical traits (iris patterns, facial geometry, fingerprints, palm prints) to establish identity and uniqueness.

What it gets right: Strong uniqueness guarantees. Difficult to fake at industrial scale.

What it gets wrong: You need specialized hardware, and you need to trust whoever runs it with data you can never change. You can't reset your iris the way you reset a password. And if the scanning device isn't in your city, or your country, you simply can't participate.
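Biometric uniqueness checks are typically a 1:N search: each new template is compared against every enrolled one, and a close enough match means the person is already registered. The Python sketch below shows that loop with iris-style binary codes compared by fractional Hamming distance. It is a simplification under stated assumptions: random integers stand in for real iris codes, flipped bits simulate a second scan, occlusion masks and rotation handling are omitted, and the 0.32 cutoff is an illustrative value of the kind used in Daugman-style iris matching.

```python
import random

CODE_BITS = 2048          # Daugman-style iris codes are 2048 bits
MATCH_THRESHOLD = 0.32    # illustrative cutoff on fractional Hamming distance


def fractional_hamming(a: int, b: int) -> float:
    """Fraction of bits that differ between two iris codes."""
    return bin(a ^ b).count("1") / CODE_BITS


def enroll(gallery: list[int], new_code: int) -> bool:
    """Reject the enrollment if it matches anyone already enrolled (1:N search)."""
    if any(fractional_hamming(new_code, c) < MATCH_THRESHOLD for c in gallery):
        return False
    gallery.append(new_code)
    return True


def noisy_rescan(code: int, flip_rate: float, rng: random.Random) -> int:
    """Simulate a second scan of the same eye by flipping a small share of bits."""
    for bit in range(CODE_BITS):
        if rng.random() < flip_rate:
            code ^= 1 << bit
    return code


if __name__ == "__main__":
    rng = random.Random(7)
    gallery: list[int] = []
    alice = rng.getrandbits(CODE_BITS)
    bob = rng.getrandbits(CODE_BITS)
    print(enroll(gallery, alice))                          # True
    print(enroll(gallery, bob))                            # True
    print(enroll(gallery, noisy_rescan(alice, 0.1, rng)))  # False: same eye
```

The sketch also makes the trust problem concrete: deduplication only works if the operator keeps every enrolled template around to compare against.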

Social Graph Verification

People vouch for each other's humanness, building a web of mutual attestation. Algorithms analyze the graph to flag clusters of fake identities.

What it gets right: Fully decentralized. No biometric data touches anyone's servers. Community-driven by nature.

What it gets wrong: Coordinated collusion can game it. A small group creates fake identities that all vouch for each other. Bootstrapping in regions without existing crypto communities is hard. And graph analysis struggles with sophisticated small-scale attacks.
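One well-known way to analyze a vouching graph is trust propagation in the style of SybilRank: trust starts at a few known-honest seed accounts, spreads along edges for only a handful of rounds, and is then divided by each account's number of connections. Because a fake cluster connects to the honest network through few "attack edges," little trust leaks into it before propagation stops. The Python sketch below is a simplified illustration of that idea on a toy graph, not the algorithm any project named in this article runs; the node names, graph shape, and the log2(n) round count are assumptions.

```python
import math


def sybilrank(graph: dict[str, set[str]], seeds: set[str]) -> dict[str, float]:
    """Degree-normalized trust after early-terminated propagation (assumes no isolated nodes)."""
    trust = {v: (1.0 / len(seeds) if v in seeds else 0.0) for v in graph}
    rounds = math.ceil(math.log2(len(graph)))  # stop early, before trust spreads evenly
    for _ in range(rounds):
        nxt = {v: 0.0 for v in graph}
        for u, neighbours in graph.items():
            share = trust[u] / len(neighbours)  # u splits its trust evenly among neighbours
            for v in neighbours:
                nxt[v] += share
        trust = nxt
    return {v: trust[v] / len(graph[v]) for v in graph}


def add_edge(graph: dict[str, set[str]], a: str, b: str) -> None:
    graph.setdefault(a, set()).add(b)
    graph.setdefault(b, set()).add(a)


if __name__ == "__main__":
    g: dict[str, set[str]] = {}
    honest = [f"h{i}" for i in range(6)]
    sybils = [f"s{i}" for i in range(4)]
    for group in (honest, sybils):
        for i, a in enumerate(group):
            for b in group[i + 1:]:
                add_edge(g, a, b)  # densely connected region
    add_edge(g, "h5", "s0")         # a single attack edge into the Sybil cluster
    scores = sybilrank(g, seeds={"h0"})
    for node in sorted(scores, key=scores.get, reverse=True):
        print(node, round(scores[node], 4))
```

The same sketch shows the weakness described above: if colluders manage to win many vouches from honest users, trust flows into their cluster and the ranking stops separating them.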

Synchronous Verification Events

Participants solve puzzles or attend sessions at a fixed time, exploiting the fact that one person can only be in one place at once.

What it gets right: Strong uniqueness proof without collecting personally identifying information (PII) like biometrics.

What it gets wrong: Scheduling excludes anyone who can't attend at the set time. AI is getting better at solving the puzzles. Convenience is traded away for security.

Government-Issued Credential Verification

Takes existing state IDs as the trust anchor, then wraps verification in zero-knowledge proofs so the document itself is never exposed.

What it gets right: Taps into globally deployed identity infrastructure with legal weight behind it.

What it gets wrong: If your country doesn't issue reliable documents, or doesn't issue them to you, you're excluded. Government ID-only approaches inherit every limitation of state-issued identity, excluding the stateless and anyone in a jurisdiction with unreliable civil registries.

Credential Aggregation (Multi-Signal Proof of Personhood)

Pulls together multiple independent identity signals (social account history, on-chain activity, government credentials via ZK, phone verification, biometric checks, community vouching) and scores them into a composite. No single signal is decisive.

What it gets right: No special hardware. Modular. Privacy-preserving. If one signal gets compromised, the rest still hold. Works for anyone with a phone and a connection.

What it gets wrong: Individual signals can be gamed in isolation. The system is only as good as the diversity and independence of its signal sources.
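A minimal Python sketch of the scoring step, assuming a simple additive model: each verified signal ("stamp") adds a fixed weight, and a holder passes once the total reaches a threshold. The stamp names, weights, and threshold are hypothetical, and real aggregators may use more elaborate models. The point is only that when every individual weight sits below the threshold, no single signal is decisive.

```python
from dataclasses import dataclass

# Hypothetical stamp weights; a real deployment would calibrate these.
WEIGHTS = {
    "social_account_age": 4.0,
    "onchain_history": 6.0,
    "phone": 3.0,
    "government_id_zk": 8.0,
    "biometric_liveness": 7.0,
    "community_vouch": 5.0,
}
THRESHOLD = 20.0


@dataclass
class Verification:
    stamps: set[str]

    def score(self) -> float:
        # Unknown stamp names contribute nothing.
        return sum(WEIGHTS.get(s, 0.0) for s in self.stamps)

    def passes(self, threshold: float = THRESHOLD) -> bool:
        return self.score() >= threshold


if __name__ == "__main__":
    user = Verification({"onchain_history", "phone", "government_id_zk", "community_vouch"})
    print(user.score(), user.passes())        # 22.0 True
    print(max(WEIGHTS.values()) < THRESHOLD)  # True: no stamp passes on its own
```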

This is the architecture Vitalik Buterin endorsed when he concluded there is "no ideal form of proof of personhood." The strongest path combines methods rather than betting on one. The MIT/OpenAI Personhood Credentials paper reaches the same conclusion.

How Projects Compare

The proof of personhood ecosystem includes fundamentally different design philosophies, built on the methods described in the previous section. The key differences are architectural as well as technical, and they determine the trade-offs users face: what they must trust and what they give up.

World (formerly Worldcoin) uses proprietary iris-scanning hardware ("Orb") for uniqueness verification. High uniqueness assurance, but users must physically visit an Orb location, scan their eyes, and trust that the resulting biometric data is handled correctly. Permanently. The project has faced regulatory action in multiple jurisdictions, including Kenya, Spain, Hong Kong, and Brazil, over data collection practices. As Vitalik Buterin noted, you cannot rotate your eyeballs. If the biometric hash is ever compromised, there is no reset.

Humanity Protocol uses palm recognition and markets itself as "privacy-preserving." However, its privacy policy tells a different story: it explicitly authorizes sharing personal data with "Authorities of competent jurisdiction (including governmental bodies, regulatory authorities, law enforcement agencies and courts and tribunals)" and permits third-party access to user data "for the purpose of training a machine learning model." The entity behind it, Human Institute Limited, is incorporated in the British Virgin Islands. Users should read the fine print before trusting a palm scan to a project whose legal terms permit broad government disclosure and ML training on personal data.

Privado ID / Billions Network uses NFC government ID scans with zero-knowledge proofs. Strong ZK implementation, but dependent on state-issued documents, which means it inherits every exclusion built into government identity systems, just like traditional KYC.

BrightID uses social graph verification. No biometrics collected, but requires an existing network to bootstrap, a significant barrier in regions without established crypto communities.

Self and zkPassport use NFC scans of biometric passport chips combined with zero-knowledge proofs to generate private attestations of age, nationality, and sanctions status. But the architecture depends entirely on government-issued biometric passports: no compatible passport, no proof. Chip-enabled passports are far from universal, and the UN estimates over 100 million people lack travel documents entirely.

Idena uses scheduled puzzle-solving ceremonies. Avoids biometrics entirely, but faces AI solvability questions as language models improve and scheduling constraints limit participation.

Human Passport aggregates multiple independent signals (social, on-chain, government credentials via ZK, optional biometrics) into a Unique Humanity Score without requiring proprietary hardware or a single point of trust. It is arguably the most robust of these designs, because multiple sources must be compromised at once for it to fail completely. Signal quality matters most here: weak signals are easy to game and lower the overall cost of forging an identity.

Personhood Credentials: The Emerging Standard

In 2024, a coalition of 32 researchers from MIT, OpenAI, Microsoft, Harvard, a16z crypto, and the Decentralized Identity Foundation published a formal framework for personhood credentials (PHCs). The paper went on to win a Future of Privacy Forum award.

The idea: a credential that proves you're a real person (not a bot, not an agent, not a duplicate) while revealing absolutely nothing else about you. Three design requirements define it:

- One credential per person per issuer: preventing duplicate accounts

- Unlinkable pseudonymity: usage cannot be traced across services, even if issuers and service providers collude

- Minimal disclosure: verification reveals nothing beyond "this person holds a valid credential"
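The first two requirements can be illustrated with per-service pseudonyms. The Python sketch below assumes a holder who already has exactly one credential secret (the issuer's job of binding one secret per person is taken as given) and derives a pseudonym for each service with HMAC-SHA256. The same service always sees the same pseudonym, so duplicates are refused, while pseudonyms for different services cannot be linked without the secret. This is a simplification: it does not prove to the service that the secret belongs to a valid credential, and it does not by itself deliver the collusion resistance the paper calls for; PHC designs rely on cryptographic tools such as zero-knowledge proofs for that part, which are omitted here. The class and service names are hypothetical.

```python
import hashlib
import hmac
import secrets


class Holder:
    """Holds one credential secret and derives a stable, service-specific pseudonym."""

    def __init__(self) -> None:
        self._secret = secrets.token_bytes(32)  # stays on the holder's device

    def pseudonym(self, service_id: str) -> str:
        # Same service -> same pseudonym; different services -> unlinkable values.
        return hmac.new(self._secret, service_id.encode(), hashlib.sha256).hexdigest()


class Service:
    """Accepts at most one account per pseudonym."""

    def __init__(self, service_id: str) -> None:
        self.service_id = service_id
        self.accounts: set[str] = set()

    def sign_up(self, holder: Holder) -> bool:
        nym = holder.pseudonym(self.service_id)
        if nym in self.accounts:
            return False  # this credential already has an account here
        self.accounts.add(nym)
        return True


if __name__ == "__main__":
    alice = Holder()
    forum, dao = Service("forum.example"), Service("dao.example")
    print(forum.sign_up(alice), forum.sign_up(alice), dao.sign_up(alice))  # True False True
    print(alice.pseudonym(forum.service_id) == alice.pseudonym(dao.service_id))  # False
```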

Personhood credentials can be understood as the formalized, privacy-maximizing implementation of proof of personhood. The paper is explicit that the concept grew out of proof-of-personhood work that started in blockchain communities.

Why the Industry Is Moving Toward Multi-Signal Architectures

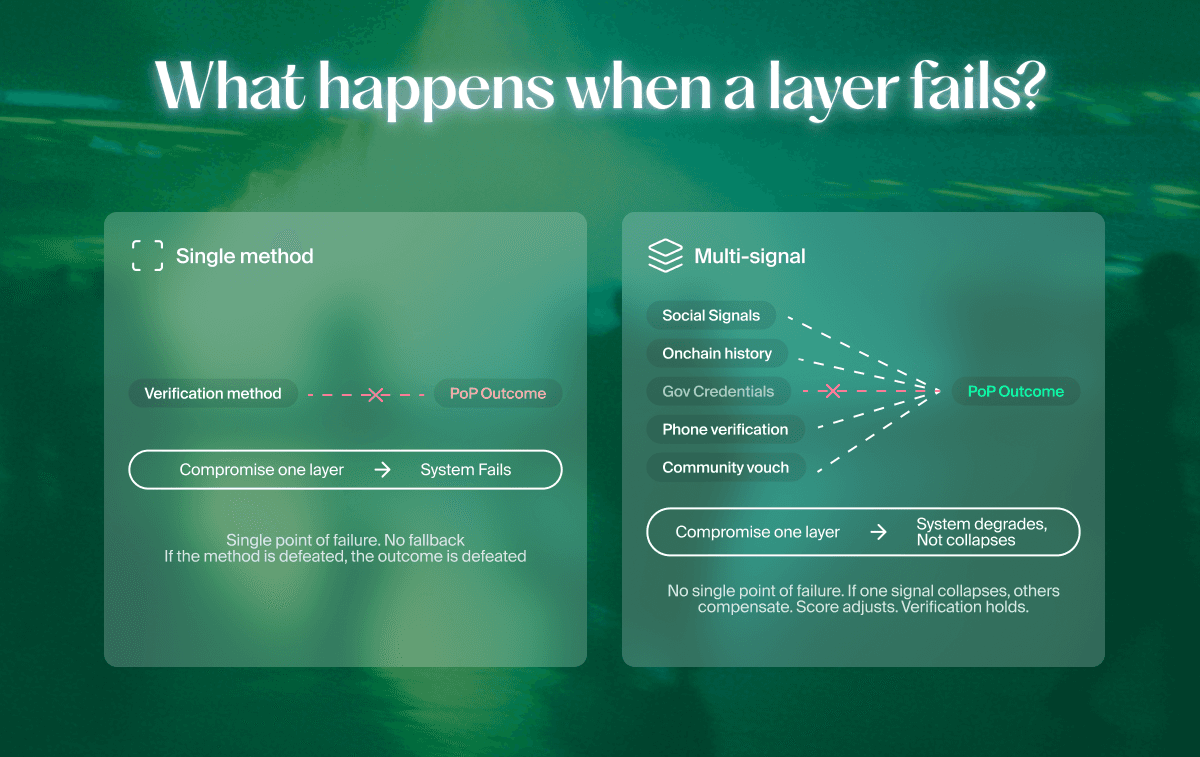

Every single-method system carries a structural weakness.

Biometric-only systems create hardware dependencies and irreversible data risk: you can't replace your eyes. Social-graph-only systems are vulnerable to coordinated collusion. Ceremony-based systems exclude anyone who can't attend at the specified time. Government-credential-only systems inherit state-system constraints and exclude undocumented populations. And some of these verifications can simply be bought.

The emerging consensus is that combining multiple independent verification signals is more robust than trusting any single one.

Multi-signal credential aggregation addresses this by design. If one verification layer is compromised, the system degrades gracefully rather than failing completely. No device, biometric, or data source becomes a single point of failure. See also: Building Sybil Resistance Using Cost of Forgery.
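Graceful degradation can be made concrete with a cost-of-forgery calculation. The Python sketch below assumes each stamp has a score weight and an estimated price an attacker would pay to fake it; the cost of forging a passing identity is then the cheapest combination of stamps whose weights reach the threshold. All names, weights, and prices are invented for illustration, and the brute-force search over combinations is only practical for a handful of stamps.

```python
from itertools import combinations

# Hypothetical stamps: (score weight, attacker's cost in USD to fake one instance).
STAMPS = {
    "phone": (3, 1.0),
    "social_account_age": (4, 5.0),
    "onchain_history": (6, 20.0),
    "community_vouch": (5, 15.0),
    "government_id_zk": (8, 60.0),
}
THRESHOLD = 20


def cost_of_forgery(stamps: dict[str, tuple[int, float]], threshold: int) -> float:
    """Cheapest total cost of any stamp combination whose weights reach the threshold."""
    best = float("inf")
    names = list(stamps)
    for r in range(1, len(names) + 1):
        for combo in combinations(names, r):
            if sum(stamps[n][0] for n in combo) >= threshold:
                best = min(best, sum(stamps[n][1] for n in combo))
    return best


if __name__ == "__main__":
    print(cost_of_forgery(STAMPS, THRESHOLD))  # 81.0
    # Simulate a fully compromised signal: the government-ID stamp becomes free to fake.
    compromised = {**STAMPS, "government_id_zk": (8, 0.0)}
    print(cost_of_forgery(compromised, THRESHOLD))  # 21.0
```

In this toy setup, losing one signal entirely drops the cost of forgery from 81 to 21 rather than to zero, because the remaining stamps still have to be faked.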

What Does Proof of Personhood Prevent?

Every system that distributes value, influence, or access based on participation is vulnerable to the same exploit: fake participants. Proof of personhood is the countermeasure.

What Is a Sybil Attack?

One person, many masks. A Sybil attack is what happens when a single actor spins up fake identities to tilt a system in their favor, a problem familiar to every web3 system but by no means limited to web3. The term was coined by Brian Zill and first discussed in a 2002 Microsoft Research paper by John R. Douceur; it references the 1973 book "Sybil," which portrayed a single individual presenting multiple identities. Sybil attacks can take the form of multi-wallet farming in token distributions, coordinated voting manipulation in DAOs, fake contributor networks inflating quadratic funding matches, bot-driven liquidity mining, referral abuse, or synthetic engagement on social platforms.

Web3 is a lucrative space for Sybils: thousands of wallets farming a single airdrop, fake accounts swinging DAO votes, bots draining liquidity mining rewards, manufactured contributors inflating quadratic funding matches. Hundreds of millions of dollars have been lost to Sybil-farmed distributions.

Proof of personhood attacks the problem at its root: verifying that each participant is a distinct human, rather than erecting economic barriers (staking) or computational ones (proof of work) that anyone with enough capital can vault over.

Use Cases: Where PoP Is Already Running

Token Sales and Airdrops

Ensure fair distribution by verifying each recipient is a unique human. Prevents the multi-wallet farming that has plagued virtually every major airdrop.

DAO Governance

Enable one-person-one-vote systems instead of plutocratic token-weighted governance, which is essential for legitimacy in community decision-making. Without proof of personhood, DAOs fall back on "one token, one vote," where whales decide everything.
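The difference shows up clearly in a toy tally. The Python sketch below assumes each ballot carries a wallet's token balance and, where available, an identifier for the verified human behind it; the ballots, addresses, and `human_id` field are hypothetical. Token weighting lets the largest holder win, while one-person-one-vote counts each verified human once, ignores a whale's second wallet, and drops unverified wallets.

```python
from collections import Counter

# Hypothetical ballots: (wallet, token_balance, choice, verified human id or None)
ballots = [
    ("0xwhale", 9_000, "no", "human-1"),
    ("0xwhale2", 500, "no", "human-1"),  # same human, second wallet
    ("0xa", 100, "yes", "human-2"),
    ("0xb", 50, "yes", "human-3"),
    ("0xc", 20, "yes", "human-4"),
    ("0xbot1", 1, "no", None),           # unverified wallet
]


def token_weighted(ballots: list[tuple]) -> Counter:
    tally = Counter()
    for _, balance, choice, _ in ballots:
        tally[choice] += balance
    return tally


def one_person_one_vote(ballots: list[tuple]) -> Counter:
    tally, counted = Counter(), set()
    for _, _, choice, human_id in ballots:
        if human_id is None or human_id in counted:
            continue  # skip unverified wallets and repeat votes by the same human
        counted.add(human_id)
        tally[choice] += 1
    return tally


if __name__ == "__main__":
    print(token_weighted(ballots).most_common(1))       # [('no', 9501)]
    print(one_person_one_vote(ballots).most_common(1))  # [('yes', 3)]
```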

Quadratic Funding

Protect matching pools from Sybil manipulation. Gitcoin Grants has used proof of personhood infrastructure since its early rounds to defend against coordinated fake contributors. Human Passport (originally Gitcoin Passport) was built specifically to solve this problem.
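Why matching pools need this protection follows from the basic quadratic funding formula, in which a project's total funding is the square of the sum of the square roots of its individual contributions, and the match is that total minus what contributors actually gave. The Python sketch below computes only this idealized match (real rounds cap and scale matches to the pool size, which is omitted) and shows how splitting one donation across fake accounts inflates it.

```python
from math import sqrt


def qf_match(contributions: list[float]) -> float:
    """Idealized quadratic funding match, before any pool cap or scaling."""
    return sum(sqrt(c) for c in contributions) ** 2 - sum(contributions)


if __name__ == "__main__":
    honest = qf_match([100.0])     # one person gives $100
    sybil = qf_match([1.0] * 100)  # the same $100 split across 100 fake accounts
    print(round(honest, 2), round(sybil, 2))  # 0.0 9900.0
```

The same $100 earns no match from one donor but a $9,900 match from 100 "donors," which is exactly the incentive proof of personhood removes.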

AI Agent Authentication

As AI agents increasingly act on behalf of humans (browsing, transacting, voting), proof of personhood becomes the mechanism to verify that an AI agent has a real human principal. This use case is growing rapidly as major tech companies ship autonomous agents. A multi-signal approach is particularly well-suited here because it can verify the human behind the agent without requiring the agent itself to pass a biometric scan.

Social Platforms

Distinguish human-posted content from AI-generated content. OpenAI has explored building a "humans-only" social network that would require proof of personhood for participation.

Reputation Systems

Anchor onchain reputation to verified unique humans, preventing reputation farming across duplicate accounts.

Pluralistic, Modular Proof of Personhood: The Human Passport Case

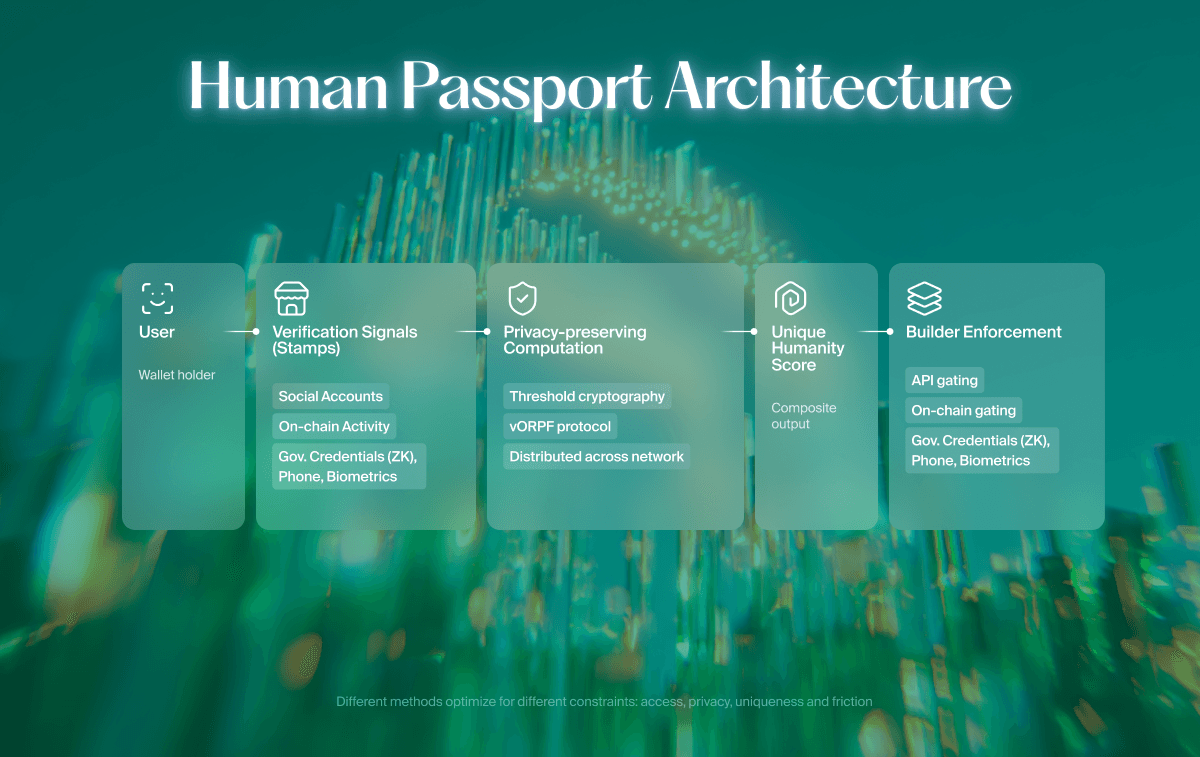

Human Passport (previously Gitcoin Passport) implements this through a Unique Humanity Score, combining independent verification stamps. The cryptographic layer relies on verifiable oblivious pseudorandom functions (vOPRFs) computed across a decentralized threshold network, ensuring no single entity can reconstruct underlying data. It also supports selective zero-knowledge verification across Government ID, Biometrics, Phone Verification, and Proof of Clean Hands Stamps, without storing documents.
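For readers who want to see the shape of the cryptography, here is a toy Python sketch of a threshold oblivious PRF built on blind Diffie-Hellman exponentiation (the idea behind constructions such as 2HashDH, minus their final output hash). The client blinds a hash of its input; each of t servers raises the blinded value to its Shamir share of a secret key; the client combines the responses and removes the blinding. The result is a deterministic output that no single server can compute alone, and no server ever sees the input. Important caveats: the group is deliberately tiny and insecure, the sketch omits the zero-knowledge proofs that make an OPRF verifiable, and it is not Human Passport's implementation, whose parameters and protocol details are not described in this article.

```python
import hashlib
import secrets

# Toy group: quadratic residues mod the safe prime P = 2Q + 1 (far too small to be secure).
P, Q = 2039, 1019


def hash_to_group(data: bytes) -> int:
    h = int.from_bytes(hashlib.sha256(data).digest(), "big") % (P - 1) + 1  # in [1, P-1]
    return pow(h, 2, P)  # squaring lands in the subgroup of order Q


def share_key(key: int, n: int, t: int) -> dict[int, int]:
    """Shamir-share the PRF key so that any t of n servers can jointly evaluate it."""
    coeffs = [key] + [secrets.randbelow(Q) for _ in range(t - 1)]
    return {i: sum(c * pow(i, j, Q) for j, c in enumerate(coeffs)) % Q for i in range(1, n + 1)}


def lagrange_at_zero(i: int, ids: list[int]) -> int:
    """Lagrange coefficient for share i when interpolating the polynomial at x = 0."""
    num, den = 1, 1
    for j in ids:
        if j != i:
            num = num * j % Q
            den = den * (j - i) % Q
    return num * pow(den, -1, Q) % Q


def evaluate(data: bytes, shares: dict[int, int], ids: list[int]) -> int:
    """Client blinds its input, the chosen servers apply their shares, client unblinds."""
    r = secrets.randbelow(Q - 1) + 1                          # blinding factor in [1, Q-1]
    blinded = pow(hash_to_group(data), r, P)                  # all the servers ever see
    partials = {i: pow(blinded, shares[i], P) for i in ids}   # one response per server
    combined = 1
    for i, part in partials.items():                          # interpolate "in the exponent"
        combined = combined * pow(part, lagrange_at_zero(i, ids), P) % P
    return pow(combined, pow(r, -1, Q), P)                    # strip the blinding factor


if __name__ == "__main__":
    key = secrets.randbelow(Q - 1) + 1
    shares = share_key(key, n=3, t=2)
    a = evaluate(b"alice-credential", shares, ids=[1, 2])
    b = evaluate(b"alice-credential", shares, ids=[2, 3])
    print(a == b == pow(hash_to_group(b"alice-credential"), key, P))  # True
```

Any two of the three servers produce the same output, while a single server holds only one share and learns nothing about the input.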

Passport's architecture maps directly onto the principles of personhood credentials (PHCs), the 2024 framework from researchers at MIT, OpenAI, a16z crypto, the Decentralized Identity Foundation, and others, described earlier in this article: aggregated credentials, privacy-preserving verification, and no central entity that can trace user activity across applications.

This isn't theoretical. Human Passport has protected over $512M in value across token distributions, airdrop campaigns, and governance mechanisms, and over 2.3M Passports have been created, making its pluralistic approach one of the industry's leading proof of personhood implementations and a clear leader in the multi-signal category.

Case studies:

- Story Protocol: How Human Passport Protected Story’s Airdrop from Sybil Attacks

- Lido Finance: Privacy-Preserving Proof of Humanity for Community Stakers

- Optimism: Human Passport Helps Secure Optimism’s Citizens’ House

- Gitcoin Grants: Defending GG23 with Model-Based Sybil Detection

For Builders: Implementing Proof of Personhood with Passport

Passport aggregates modular verification signals into a privacy-preserving Unique Humanity Score and provides independent model-based Sybil detection. No proprietary hardware required.

1. Choose your verification surface

- Passport App: Redirect users to complete verification in the Passport app, then return to your product.

- Passport Embed: Integrate the verification flow directly into your frontend using the embeddable component.

Use Embed when you want a seamless UX inside your product.

Use the App only when the speed of integration is critical.

2. Choose your enforcement model

- API gating (offchain): Query Passport before allowing a claim, vote, or allocation.

- Onchain gating: Verify eligibility directly in your smart contract for minting, voting, or token distribution.

3. Set your thresholds

Passport exposes two distinct signals:

- Unique Humanity Score (stamp-based aggregation) → Recommended threshold: 20

- Model-based detection score (1–100 scale) → Recommended threshold: 50

Scores of 20 (Unique Humanity) and 50 (Model) are the calibrated thresholds most production integrations use. Users do not need to clear them by a wide margin.
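Putting steps 2 and 3 together for offchain (API) gating, a minimal Python sketch might look like the following. It assumes you already have some way to fetch both scores for an address, represented here by a hypothetical `lookup` callable rather than any real Passport endpoint or SDK call (those are in the integration docs). Requiring both signals to clear their thresholds when both are in use, and treating a score equal to the threshold as passing, are illustrative choices, not documented Passport behavior.

```python
from dataclasses import dataclass
from typing import Callable, Optional

UNIQUE_HUMANITY_THRESHOLD = 20  # recommended stamp-based threshold
MODEL_THRESHOLD = 50            # recommended model-based threshold (1-100 scale)


@dataclass
class Scores:
    unique_humanity: float
    model: Optional[float] = None  # None when the model score is not used


def is_eligible(s: Scores) -> bool:
    if s.unique_humanity < UNIQUE_HUMANITY_THRESHOLD:
        return False
    return s.model is None or s.model >= MODEL_THRESHOLD


def gate_claim(address: str, lookup: Callable[[str], Scores],
               claim: Callable[[str], None]) -> bool:
    """Check eligibility before any state change; `lookup` is a hypothetical score source."""
    if not is_eligible(lookup(address)):
        return False
    claim(address)
    return True


if __name__ == "__main__":
    fake_scores = {"0xabc": Scores(23.5, 61), "0xdef": Scores(12.0, 80)}  # made-up data
    claimed: list[str] = []
    for addr in fake_scores:
        gate_claim(addr, fake_scores.__getitem__, claimed.append)
    print(claimed)  # ['0xabc']
```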

4. Monitor and adjust if necessary

Track pass rates, distribution quality, and suspicious clustering. Adjust parameters if your threat model changes.
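A simple monitoring pass can be sketched in Python as below. It assumes a hypothetical log with one record per claim attempt, holding whether the check passed and the address that first funded the wallet. Many passing wallets sharing one funding source is one common farming heuristic; the field names and the alert level of 3 are assumptions to tune against your own threat model.

```python
from collections import Counter


def monitoring_report(records: list[dict], cluster_alert: int = 3) -> dict:
    """records: one dict per claim attempt with hypothetical keys 'passed' and 'funder'."""
    total = len(records)
    passed = [r for r in records if r["passed"]]
    funders = Counter(r["funder"] for r in passed)
    return {
        "pass_rate": len(passed) / total if total else 0.0,
        # Funding sources shared by many passing wallets are worth a closer look.
        "suspicious_funders": sorted(f for f, n in funders.items() if n >= cluster_alert),
    }


if __name__ == "__main__":
    log = [
        {"passed": True, "funder": "0xf1"},
        {"passed": True, "funder": "0xf1"},
        {"passed": True, "funder": "0xf1"},
        {"passed": True, "funder": "0xf2"},
        {"passed": False, "funder": "0xf3"},
    ]
    print(monitoring_report(log))  # {'pass_rate': 0.8, 'suspicious_funders': ['0xf1']}
```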

→ Integration resources: docs.passport.xyz

Where Proof of Personhood Goes Next

Proof of personhood is the base layer that everything else in decentralized identity ultimately depends on. Before you can issue a verifiable credential for someone's age, nationality, or professional qualifications, you need to establish that the credential holder is a real, unique human. That's the problem proof of personhood solves.

The architecture debate is converging on one conclusion: no single method is sufficient. The projects that will define this space are the ones building modular systems that combine multiple independent verification signals, preserve privacy by cryptographic design rather than policy promise, and remain accessible without requiring specialized hardware or in-person ceremonies.

The question is no longer whether proof of personhood matters. It's which implementation gets it right at scale.

Frequently Asked Questions

Is proof of personhood the same as KYC?

Different questions, different architectures. KYC demands identifying documents to answer "who are you?" Proof of personhood uses privacy-preserving proofs to answer "are you a unique human?" without learning anything else. The two can work together, but they solve fundamentally different problems.

Does proof of personhood require scanning my iris or face?

No. That's one implementation, not a requirement of the concept. Multi-signal aggregation achieves the same goal by stacking independent verification signals, with no biometric data collection necessary. Human Passport computes a Unique Humanity Score from diverse credential sources without ever storing biometric data.

Does proof of personhood require biometrics?

It can include them, but doesn't have to. Biometric-only approaches demand specialized hardware and concentrate trust in a single operator. More resilient architectures layer multiple independent methods (social signals, on-chain history, government credentials via ZK) so no single entity or signal type becomes the system's weak link.

How is proof of personhood different from human verification?

Human verification asks "is a person doing this?" CAPTCHAs, liveness checks, phone codes. Proof of personhood asks the harder question: "is this person unique?" The first stops bots. The second stops one person from being ten people. Every PoP system is a form of human verification; almost no human verification system is proof of personhood.

Is Humanity Protocol actually privacy-preserving?

Their marketing says yes. Their privacy policy says they can share personal data with government authorities, regulators, and law enforcement, and that third parties can access user data for ML model training. The parent entity is registered in the British Virgin Islands. Privacy-by-policy and privacy-by-cryptography are not the same thing. Read the terms and decide for yourself.

How is Human Passport different from World?

World's architecture depends on a proprietary hardware device (the Orb), a single biometric modality (iris), and a single operator holding that data. Human Passport's architecture depends on none of those things. It aggregates signals from multiple independent sources into a Unique Humanity Score using threshold cryptography. No single device. No single biometric. No single point of trust.

Can AI break proof of personhood?

It already breaks older methods: CAPTCHAs, basic liveness detection, knowledge-based questions. Systems built around multi-signal aggregation are harder to crack because defeating one layer doesn't defeat the others. Single-method approaches carry significantly higher obsolescence risk as models improve.

*Human Passport provides modular, privacy-preserving proof of personhood infrastructure. Part of human.tech.*